Why does OpenAI’s CTO make that face when asked “What data was used to train Sora?”

The Puzzling Expression of OpenAI’s CTO: Unpacking the Question of Sora’s Training Data

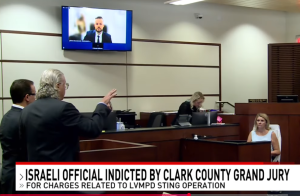

Questions about the data used to train AI models are both crucial and complex. During a recent discussion, OpenAI’s Chief Technology Officer, Mira Murati, was asked a pointed question: “What data was used to train Sora?”

This simple question drew a noticeable reaction, one that left many observers wondering what lay behind the expression. The moment is worth exploring because it shows how closely data transparency is tied to AI development.

Understanding the Context

Sora, a model developed by OpenAI, raises ethical and practical questions about AI training data. Public curiosity about which datasets go into advanced AI systems reflects a growing awareness of privacy and bias issues in machine learning. Murati’s reaction, which seemed to combine surprise and contemplation, reflects the weight such questions carry.

Why the Reaction Matters

For AI developers and others in the industry, questions about training data carry significant implications. They touch on technical challenges as well as proprietary information, data privacy, and the potential for bias in AI outputs. Murati’s expression can therefore be read as more than a response to a single question: it reflects the multifaceted challenges facing AI developers today.

The Bigger Picture

The conversation around AI training data is evolving, with growing demands for transparency. As AI systems like Sora gain prominence, stakeholders from developers to end users want to understand what these models are built on. That includes not only the types of data used but also how that data shapes a model’s performance and accountability.

In conclusion, Murati’s reaction is a reminder that the dialogue around AI training data is both vital and complex. As AI technology advances, questions about training data will keep coming up, prompting discussions about ethics, transparency, and the future of artificial intelligence. The expression on Murati’s face, simple as it seems, invites a broader inquiry into the corporate and ethical responsibilities bound up with AI development.
