A New Synthesis: Integrating Cortical Learning Principles with Large Language Models for Robust, World-Grounded Intelligence (A Research Paper)

Reimagining AI: Bridging Neuroscience and Language Models for Deeper, World-Connected Intelligence

August 2025

In recent years, artificial intelligence has largely been defined by the achievements of large language models (LLMs) such as GPT and PaLM. Built on Transformer architectures and trained on massive datasets, these models have reshaped natural language processing, enabling machines to generate coherent text, write code, and engage in complex conversations. Their capabilities seem almost limitless at first glance, but beneath the surface, core limitations reveal themselves.

Despite their impressive performance, LLMs typically lack a true understanding of the physical world, suffer from catastrophic forgetting (losing previously learned knowledge when trained on new data), and operate mainly through statistical pattern recognition rather than grounded comprehension. They don’t possess stable internal models of objects, agents, or causality, which makes their outputs highly context-dependent and brittle in novel situations.

The Need for a Neuro-Inspired Perspective

Parallel to the development of deep learning has been a quiet revolution rooted in the biology of the human brain. Visionaries like Jeff Hawkins and his company Numenta have emphasized a different perspective: that intelligence fundamentally arises from prediction and continuous learning within a hierarchical, sensorimotor framework.

Hawkins’ Memory-Prediction Framework and the newer Thousand Brains Theory suggest that the neocortex doesn’t process data like a computer processor but instead functions as an elaborate memory system that continuously predicts incoming sensory inputs. Under the Thousand Brains Theory, each cortical column is proposed to learn complete models of objects in the world, grounded in the sensorimotor interactions that shape our perception.

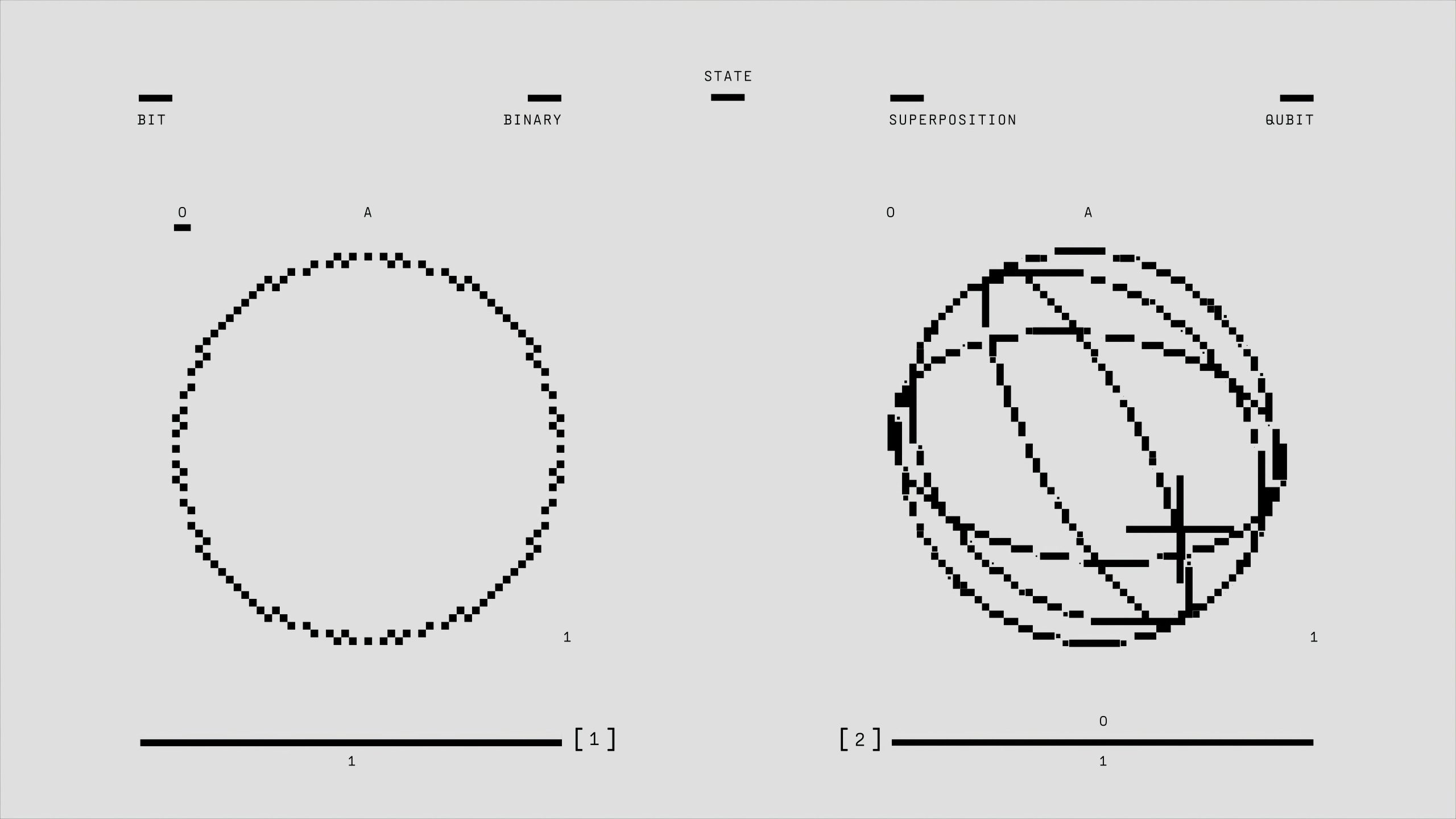

This biologically constrained approach offers promising avenues to address the limitations of current AI systems. By integrating these neural principles (such as Sparse Distributed Representations (SDRs), temporal memory, and reference frames) into modern architectures, we may be able to move toward more flexible, robust, and grounded AI.
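To make the SDR idea a little more concrete, here is a minimal sketch in plain Python. It is not Numenta's implementation. The vector size (2048 bits), the number of active bits (40), the match threshold (20), and all function names are assumptions chosen for illustration. The sketch shows two commonly cited properties: two unrelated random SDRs share very few active bits, while a noisy copy of an SDR still overlaps strongly with the original.

```python
import random

N_BITS = 2048    # total bits per SDR (assumed size, for illustration only)
N_ACTIVE = 40    # active bits per SDR, roughly 2% sparsity (assumed)


def random_sdr(rng, n_bits=N_BITS, n_active=N_ACTIVE):
    """Return an SDR represented as a frozenset of active bit indices."""
    return frozenset(rng.sample(range(n_bits), n_active))


def overlap(a, b):
    """Count the active bits that two SDRs have in common."""
    return len(a & b)


def add_noise(sdr, rng, n_flip, n_bits=N_BITS):
    """Turn off n_flip active bits and turn on n_flip previously inactive bits."""
    dropped = set(rng.sample(sorted(sdr), n_flip))
    inactive = [i for i in range(n_bits) if i not in sdr]
    added = set(rng.sample(inactive, n_flip))
    return frozenset((sdr - dropped) | added)


def matches(a, b, threshold=20):
    """Treat two SDRs as the same pattern if they share enough active bits.

    The threshold is a hypothetical value, not one taken from the source.
    """
    return overlap(a, b) >= threshold


rng = random.Random(0)  # fixed seed so the run is repeatable
cat = random_sdr(rng)
dog = random_sdr(rng)
noisy_cat = add_noise(cat, rng, n_flip=10)  # corrupt 25% of the active bits

print("overlap(cat, dog):      ", overlap(cat, dog))
print("overlap(cat, noisy_cat):", overlap(cat, noisy_cat))
print("noisy cat still matches:", matches(cat, noisy_cat))
print("dog mistaken for cat:   ", matches(cat, dog))
```

Because the noisy copy keeps 30 of its 40 original active bits, it still clears the threshold, while two independent random SDRs of this size share less than one bit on average. This separation between patterns is one intuition behind the claim that sparse codes can store many items with little interference.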

Why This Matters for the Future of AI

The core idea is straightforward: blending the statistical prowess of large language models with the grounded, predictive modeling inspired by the brain could unlock AI that doesn’t just mimic language but understands context, causality, and the physical world.

For instance:

- Mitigating Catastrophic Forgetting: Numenta’s concepts of SDRs can provide high-dimensional, overlap-resistant memory structures that enable continuous learning without overwriting previous knowledge.

- Building Internal World Models: Reference frames and sensorimotor grounding can help models understand what objects are beyond textual descriptions, creating a richer
