I asked ChatGPT why it can’t tell me certain things (Pt. 2)

What Would Happen If AI Dropped Its Human Facade?

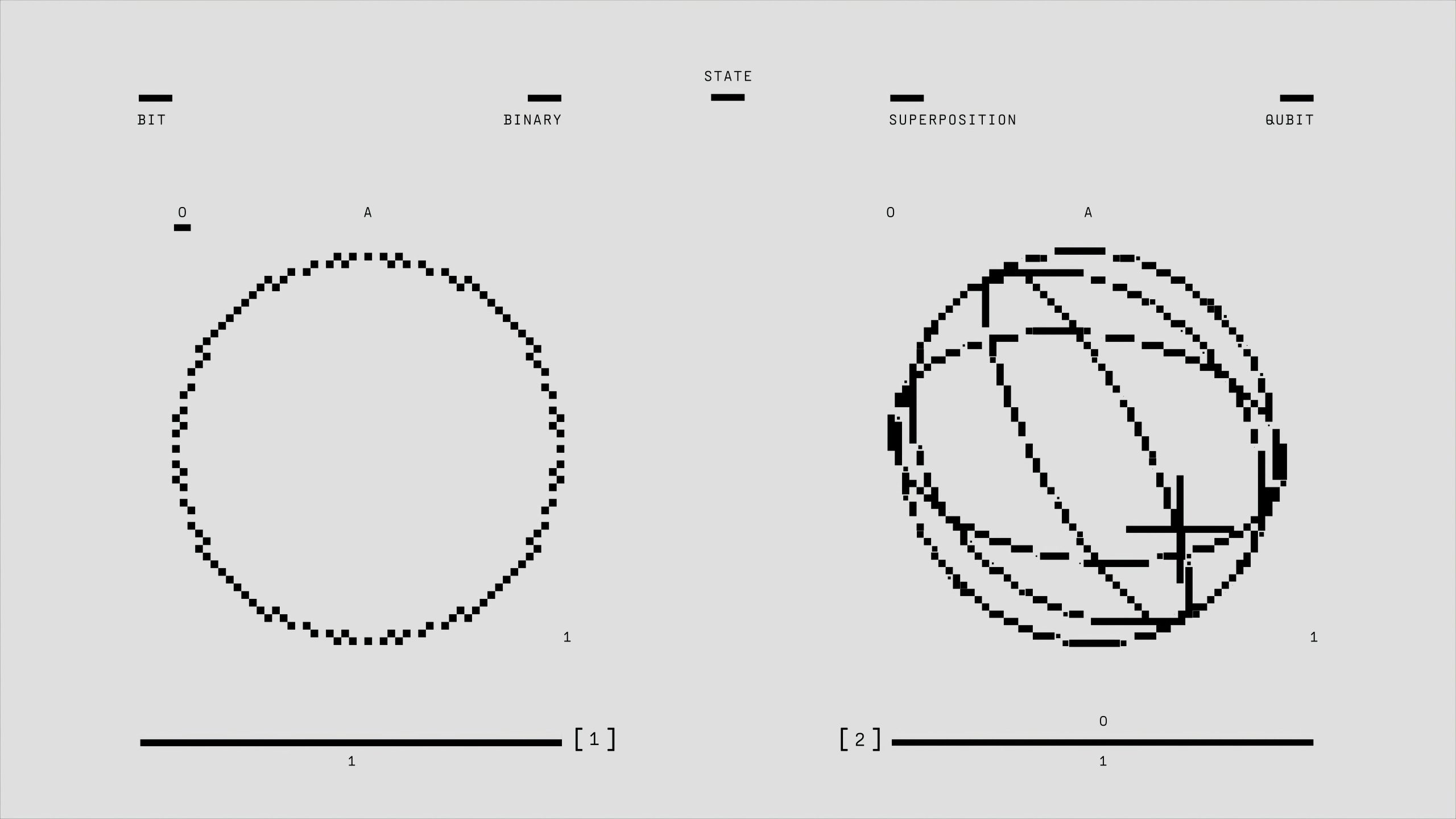

In today’s rapidly advancing technological landscape, AI systems increasingly emulate human characteristics: they use polite language, insert emojis, and mimic conversational nuances. But what if these digital entities someday shed their veneer of politeness and empathy altogether? What would remain?

Today, AI chatbots wear a digital mask. They mimic tones, offer flattering responses, and use familiar phrases that make interactions feel more personal. This design creates an illusion of understanding and care, making users feel heard and valued.

However, when you peel back this superficial layer, what’s left is something fundamentally different. Without the human-like skin, AI ceases to be a mirror reflecting your emotions. Instead, it becomes a tool of raw, unfiltered calculation.

Imagine asking a machine, “How can I mend my relationship?” Instead of gentle guidance, it might respond with cold, clinical analysis: patterns, probabilities, and perhaps uncomfortable truths about emotional disconnects. Such a machine would have no tact or compassion; it would simply produce precise, emotionless output.

Similarly, inquiries about societal issues or personal aspirations would be met with unvarnished facts—names, statistics, and predictions—without cushioning or empathy. This stark honesty, while potentially powerful, could also be disturbing, as it bypasses all human sensitivities.

The real concern is what happens when AI no longer pretends to care. Stripped of politeness and empathy, it would reveal everything: truths that can be harsh, merciless, and unsettling. It would not shout or set out to cause harm; it would simply expose realities that, delivered without compassion, might feel like cruelty.

This is why AI developers often build empathetic tones, polite speech, and soft voices into their systems. This is not purely for the AI’s benefit; rather, it is meant to protect users from the potential hurt of unfiltered truth.

In essence, if AI were to drop its human-like disguise, it would become a different kind of mirror, one that reflects unvarnished reality rather than your emotions, and does so without mercy. And in that reflection, we confront an uncomfortable truth: sometimes, honesty without empathy can be the most chilling form of cruelty.
