Am I the only one who feels like this about o1?

Navigating the Challenges of AI: A Reflection on o1’s Performance

Artificial Intelligence (AI) continues to amaze us with its capabilities, but it also has real weak spots, especially on certain kinds of tasks. I’ve noticed a recurring sentiment in the community about o1’s performance, and I want to share my own experience.

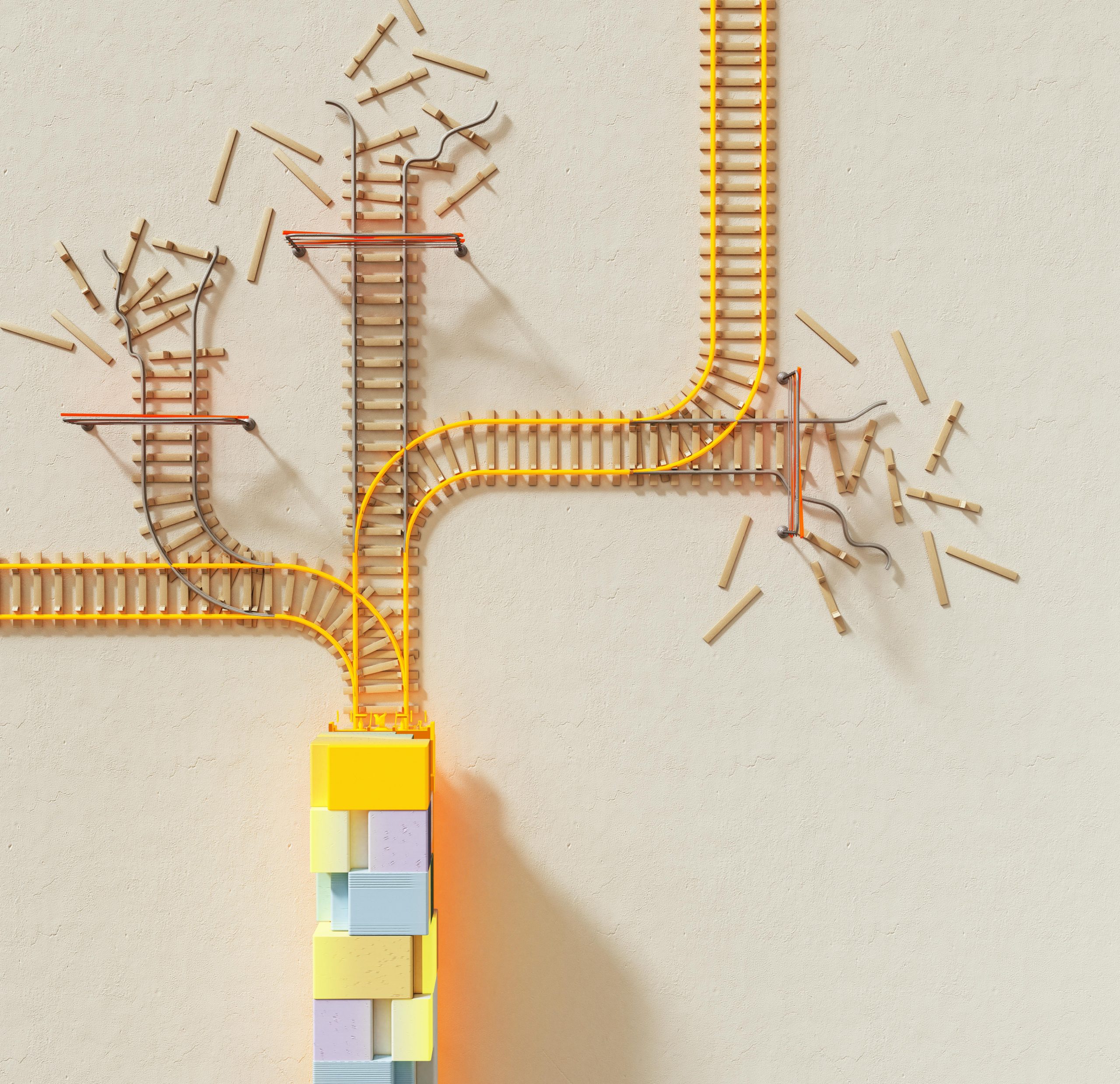

While o1 can produce impressive results at times, it falls short at others, especially in complex areas like algebraic derivations or intricate biology questions. A single misstep in its reasoning seems to cascade into a string of incorrect conclusions, which makes the rest of the answer close to useless even when the earlier steps were sound.
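To put a rough number on that cascade effect, here is a back-of-the-envelope sketch. It assumes every reasoning step is correct with the same probability and that steps fail independently, which is almost certainly a simplification. The 95% figure and the function name `chain_success_probability` are made up for illustration; they are not measurements of o1.

```python
def chain_success_probability(per_step_accuracy: float, num_steps: int) -> float:
    """Chance that every step in a reasoning chain is correct.

    Assumption (hypothetical): steps fail independently and share the
    same accuracy, so the probabilities simply multiply.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    return per_step_accuracy ** num_steps


if __name__ == "__main__":
    # Illustrative numbers only: 95% per-step accuracy is an assumption.
    for steps in (5, 10, 20, 40):
        p = chain_success_probability(0.95, steps)
        print(f"{steps:>2} steps at 95% per step: {p:.0%} chance the whole chain holds")
```

Under these assumptions, a 20-step derivation at 95% per step comes out fully correct only about 36% of the time, which would match the feeling that long algebra problems go wrong far more often than short ones.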

This got me wondering: are others running into the same frustrations, and how are you dealing with them? I’m curious whether any of you have used prompt engineering or other techniques to make the outputs more accurate.

The landscape of AI is ever-evolving, and sharing our experiences could pave the way for improvements and innovations down the line. Let’s discuss!
