Why LLMs Can’t Count the R’s in the Word “Strawberry”

Understanding Why Large Language Models Struggle to Count Letters: The Case of “Strawberry”

In discussions about the capabilities of Large Language Models (LLMs), a common point of curiosity is why these models often stumble on seemingly simple tasks, such as counting the number of “R”s in “Strawberry.” The failure is a useful window into how these models actually process language.

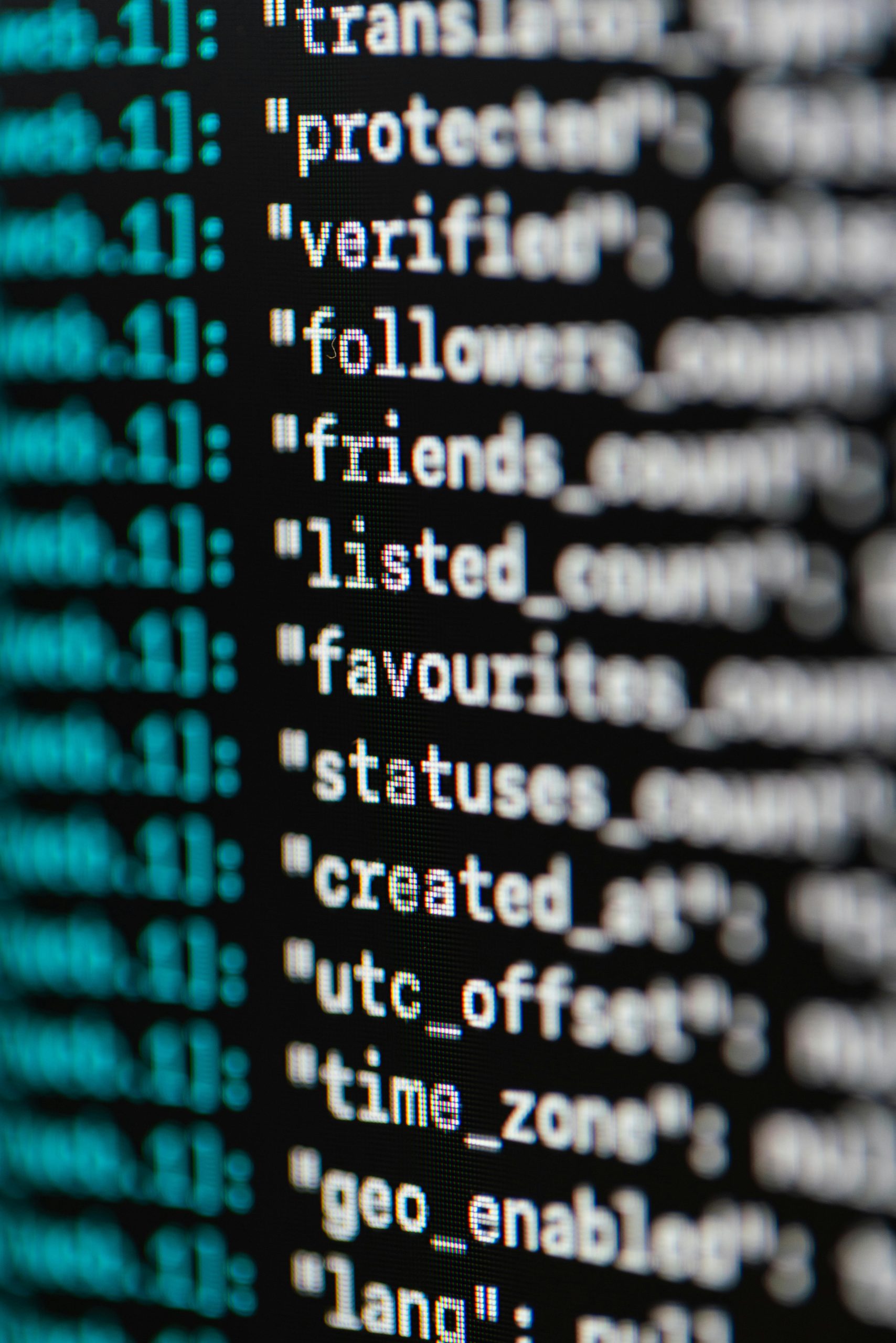

The Inner Workings of LLMs

At their core, LLMs operate by converting raw text into a series of smaller units known as “tokens.” These tokens might represent words, parts of words, or even individual characters, depending on the model’s design. Once the text is tokenized, each token is mapped to an integer ID, and that ID is used to look up a numerical representation called a “vector” (often called an embedding). These vectors are high-dimensional arrays of numbers that the model processes through multiple layers to generate a response.
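The pipeline described above can be sketched in a few lines of Python. This is a toy illustration only: the vocabulary `TOY_VOCAB`, the greedy longest-match `tokenize` function, and the random `embed` table are all hypothetical stand-ins. Real models learn their subword vocabulary and their vectors from data, and the way they split any given word may differ.

```python
import random

# Hypothetical toy vocabulary. Real vocabularies are learned from data
# and typically contain tens of thousands of subword pieces.
TOY_VOCAB = {"straw": 0, "berry": 1, "s": 2, "t": 3, "r": 4,
             "a": 5, "w": 6, "b": 7, "e": 8, "y": 9}


def tokenize(text, vocab):
    """Split text into vocabulary pieces using greedy longest-match.

    This is a simplification for illustration, not the algorithm any
    particular model uses.
    """
    text = text.lower()
    pieces = []
    i = 0
    while i < len(text):
        # Try the longest remaining substring first, then shrink it.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                pieces.append(text[i:j])
                i = j
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return pieces


def embed(token_ids, vocab_size, dim=4, seed=0):
    """Look up one vector per token ID in a (random, untrained) table."""
    rng = random.Random(seed)
    table = [[rng.uniform(-1, 1) for _ in range(dim)]
             for _ in range(vocab_size)]
    return [table[token_id] for token_id in token_ids]


pieces = tokenize("Strawberry", TOY_VOCAB)
ids = [TOY_VOCAB[p] for p in pieces]
vectors = embed(ids, vocab_size=len(TOY_VOCAB))

print(pieces)        # ['straw', 'berry']
print(ids)           # [0, 1]
print(len(vectors))  # 2 vectors, one per token
```

Note that after the lookup step the model works with two vectors, not ten letters. Nothing in the ID `0` or its vector spells out "s-t-r-a-w".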

Why Counting Letters Is Not Directly Achievable

One key point to understand is that LLMs are trained to understand and generate language based on patterns in large datasets. They are not specifically trained to recognize or count individual characters within words. A token’s vector is a learned lookup for the token as a whole, not something computed from its spelling, so the token-to-vector conversion does not explicitly preserve character-level details. Instead, it captures the contextual meaning and relationships between words and phrases.

This means that the precise count of a particular letter within a word, such as the three “R”s in “Strawberry,” isn’t inherently stored within the model’s internal representations. When asked to perform such a task, the model may therefore produce an incorrect answer. The cause is not a lack of intelligence; the underlying architecture simply isn’t optimized for exact letter counting.
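To make the contrast concrete, here is a minimal sketch comparing the character-level view that ordinary code has with the token-level view described above. The split into "straw" and "berry" and the ID values are assumptions chosen for illustration; an actual tokenizer may divide the word differently.

```python
word = "Strawberry"

# Character-level view: ordinary code sees every letter directly.
r_count = word.lower().count("r")
print(r_count)  # 3

# Token-level view (hypothetical split and IDs): the model receives
# only the IDs, which carry no information about spelling.
token_pieces = ["straw", "berry"]
token_ids = [0, 1]

# To count letters from tokens, something must know how many "r"s
# each piece contains. Code can read the text of each piece; a model
# has to have learned that fact indirectly from its training data.
r_per_token = {piece: piece.count("r") for piece in token_pieces}
print(r_per_token)                # {'straw': 1, 'berry': 2}
print(sum(r_per_token.values()))  # 3
```

The per-token counts are trivial to compute when the text of each piece is available, which is exactly the information the model does not see directly.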

Visualizing the Concept

For a detailed explanation, including diagrams that illustrate this process, see https://www.monarchwadia.com/pages/WhyLlmsCantCountLetters.html. The article offers valuable insight into how large language models work and where they fall short on character-level tasks.

In Summary

LLMs excel at understanding context, generating human-like text, and recognizing language patterns. However, their design does not inherently support precise character-level operations like counting specific letters within words. Recognizing this distinction helps set realistic expectations for what these models can and cannot do effectively.

