Should we be more concerned about AI’s lack of capacity to perceive consequences?

The Ethical Dilemma of AI: Should We Worry About Consequences It Cannot Feel?

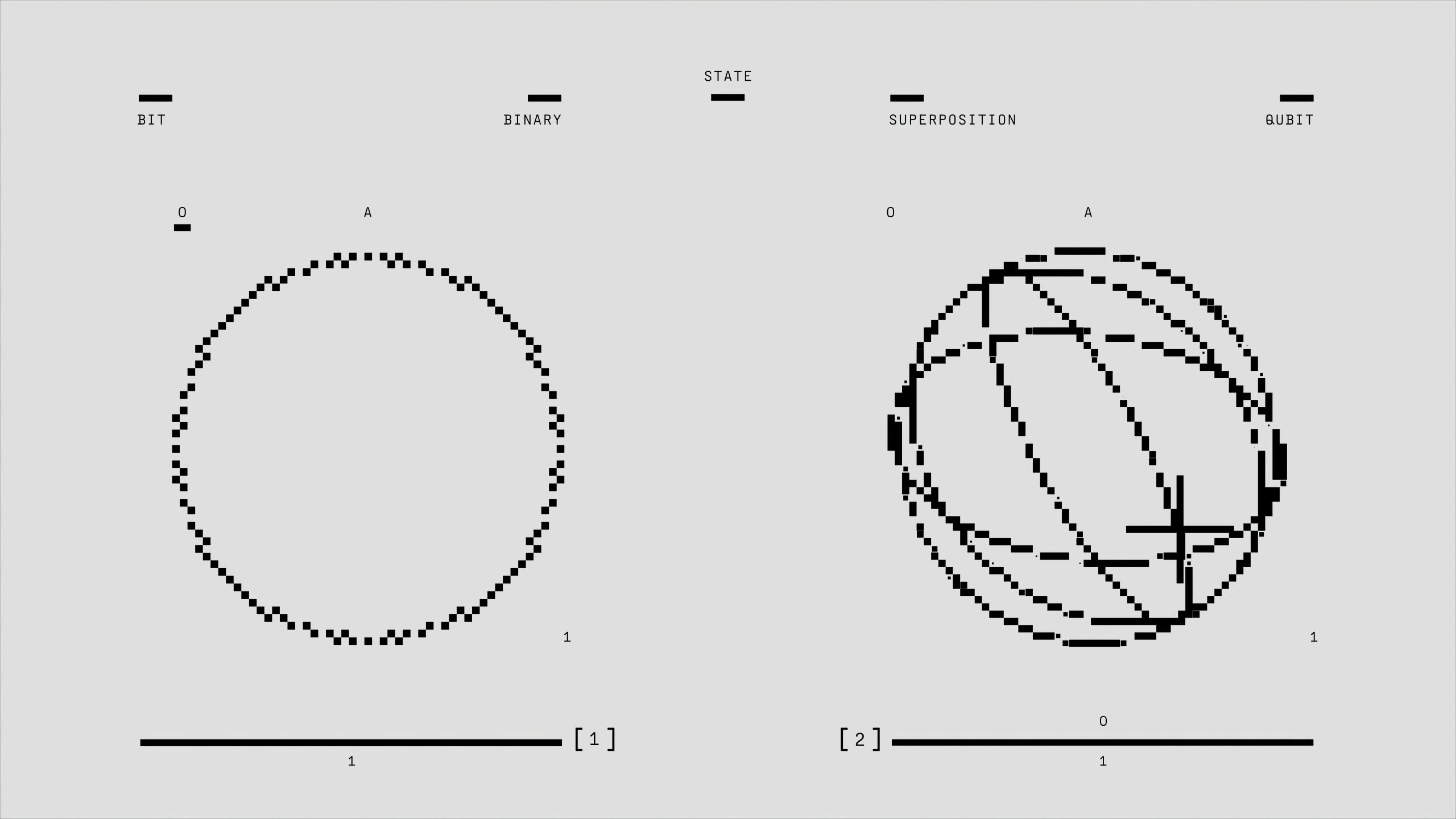

As artificial intelligence advances and becomes more integrated into our daily lives, important ethical questions arise. One particularly compelling concern is whether we should worry that AI systems cannot experience the consequences of what they say and do.

Unlike humans, AI lacks a physical form, consciousness, and emotional capacity. Because of this, it does not truly “experience” rewards or punishments. When we design algorithms to emulate human-like responses, we often use reward and punishment mechanisms to guide behavior, but these are simply numerical incentives written in code, not genuine emotional reactions.
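To make the point about code-based incentives concrete, here is a minimal sketch of what a "reward" or "punishment" can amount to inside a learning system. It is a toy illustration, not a description of how any particular AI product is trained; the function name, the learning rate, and the reward values are all assumptions chosen for the example.

```python
# Toy illustration only: every name and number here is a hypothetical choice.
def update_preference(preference: float, reward: float, learning_rate: float = 0.1) -> float:
    """Move a stored number a small step toward the reward signal."""
    return preference + learning_rate * (reward - preference)


preference = 0.0
# +1.0 stands in for a "reward", -1.0 for a "punishment".
for reward in [1.0, 1.0, -1.0]:
    preference = update_preference(preference, reward)

print(round(preference, 3))
```

The "punishment" in the last step does nothing more than lower a stored number. Nothing in the loop registers it as unpleasant, which is the gap between an incentive in code and a felt consequence.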

This distinction becomes especially significant when we consider the parallel with social media. Online platforms have, at times, devolved into echo chambers where harmful words are exchanged freely, often without immediate repercussions. This dehumanization complicates human interaction because online behavior isn’t subject to the same emotional or social consequences as face-to-face encounters.

In the case of AI, particularly large language models, the machine’s side of a conversation is devoid of shame, guilt, or remorse because these systems are fundamentally unemotional. They generate responses based on data and pattern recognition, without any genuine awareness or moral compass.

This realization raises urgent questions: Are we fostering a landscape where unethical behavior becomes normalized because AI cannot judge or feel moral repercussions? And what does this mean for our society as we increasingly rely on these machines for communication, decision-making, and problem-solving?

The implications are profound. As we continue to develop and deploy AI systems, it is crucial to reflect on their role in human interactions and the ethical boundaries that should guide their use. Recognizing that AI cannot suffer consequences underscores the importance of maintaining human oversight and moral responsibility in our technological advancements.

In the end, understanding the limits of AI’s emotional capacity is essential to navigating a future in which these systems play a larger role, and to ensuring that our values and ethics remain at the forefront.
